In the heat of summer, a 19-second video from the World Economic Forum gathering in Davos, Switzerland, made the rounds on Twitter (now X). “The big political and economic question of the 21st century will be: What do we need humans for?” said Israeli historian Yuval Harari. “At present, the best guess we have is keep them happy with drugs and computer games.”

Harari’s broader writing suggests he wasn’t endorsing the future he publicly envisioned, and 19 seconds isn’t fair context for his fuller meaning. But whatever Harari’s intent, the question is a pressing one as artificial intelligence technology progresses to more useful stages.

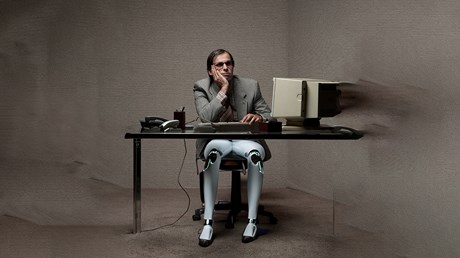

What are humans for? What is AI for? What problem—as author and Grove City College professor Jeffrey Bilbro recently asked in Plough—do we want ChatGPT and other AI toys and tools to solve? And will AI serve us well? Or will we conform to the machine?

I’ve been thinking about this in the context of my own work, because readers keep asking me if I think generative AI programs like ChatGPT will replace journalists. A few media companies, most notably BuzzFeed, have already announced they’ll use AI to pump out digital bagatelles at an even higher volume and lower cost than before. Will more serious outlets that produce hard news and careful analysis do likewise?

At the risk of sounding Pollyannaish, I don’t think so. AI will take over some journalism work, yes, but not the kind we should have been reading anyway. It won’t replace the war correspondent, the reporter at the school board meeting, the omnivorous public intellectual, the conversation-driving personal essayist. My guess is human writers will become a hallmark of high-quality and prestige media (which aren’t necessarily one and the same), much as intensive human service is a hallmark of luxury restaurant and hotel experiences now.

AI, meanwhile, will handle cheap digital news aggregation, scraping ...